The WordPress SEO Audit Checklist for 2023 – With Free Tools

Introduction

In the two previous articles of this series (On-Page SEO 2021: The Beginner’s Guide For WordPress and On-Page SEO: Introduction To Search Engine Optimisation 2021), we first looked at how search engines analyze sites, and then at the elements to implement on a site to increase its chances of appearing in the results for relevant user queries. In this article, we will go through different tools you can use to audit your site and identify areas for improvement.

Step 1: Page status analysis with Screaming Frog

The Screaming Frog application is quite useful for analyzing the status of your site. It comes in a free and a paid version. The free version is limited to 500 URLs, which is enough to cover smaller sites. You download the application to your computer, and it crawls your site as a search engine bot would.

For example, in the case of a WordPress site, the bot will crawl the pages, categories, tags, images, and more, which means that you reach 500 URLs quickly. With the free version of the app, you can run the following analyses:

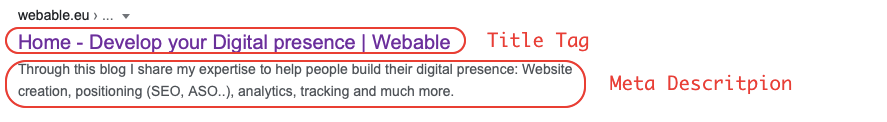

1.1 Optimize the tags on the page: title and Meta description.

We went over the meaning of these rather important tags in the article on on-page SEO. If you are not familiar with these terms, I strongly advise you to read that article first. What you need to remember for this part is that Screaming Frog lets you analyze your pages to check whether they respect the best practices for the number of characters in the title and description tags.

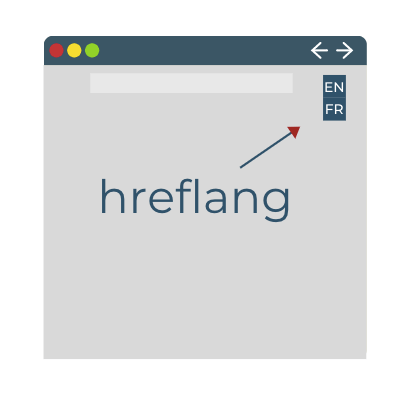

1.2 Auditing languages on the page: hreflang attributes

When you have a multilingual site, the “hreflang” attributes allow you to indicate the language of each version of your content. This point needs a little more clarification: to decide which content to display to the user, the search engine looks at the language of the query, the language configured in the browser, and the user’s search habits (other factors come into play, but to keep things simple I will only mention these three).

For example, when I type the query “SEO” into the search engine, I still get results in French because of my search history, even though my Google Chrome is configured in English and the query is in English. Having this attribute configured correctly therefore lets the search engine know in which context to display a given page of your site.
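To make the idea more concrete, here is a minimal Python sketch of how the hreflang annotations of a page could be listed. It assumes the annotations are declared as `<link rel="alternate" hreflang="...">` tags in the page's head; the `example.com` URLs and the helper name `extract_hreflang` are placeholders for illustration, not something taken from Screaming Frog.

```python
from html.parser import HTMLParser


class HreflangParser(HTMLParser):
    """Collects (hreflang, href) pairs from <link rel="alternate"> tags."""

    def __init__(self):
        super().__init__()
        self.alternates = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attributes = dict(attrs)
        rel_values = (attributes.get("rel") or "").lower().split()
        # Keep only alternate links that actually declare a language.
        if "alternate" in rel_values and attributes.get("hreflang"):
            self.alternates.append((attributes["hreflang"], attributes.get("href")))


def extract_hreflang(html):
    """Return the list of (language, URL) pairs declared in the HTML."""
    parser = HreflangParser()
    parser.feed(html)
    return parser.alternates


# Hypothetical <head> of a bilingual page.
sample_head = """
<head>
  <link rel="alternate" hreflang="en" href="https://example.com/en/seo-audit/">
  <link rel="alternate" hreflang="fr" href="https://example.com/fr/audit-seo/">
  <link rel="stylesheet" href="/style.css">
</head>
"""

hreflang_pairs = extract_hreflang(sample_head)
for language, url in hreflang_pairs:
    print(language, url)
```

A list like this makes it easy to spot a language version that is missing or that points to the wrong URL.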

1.3 Discovering and extracting duplicate content

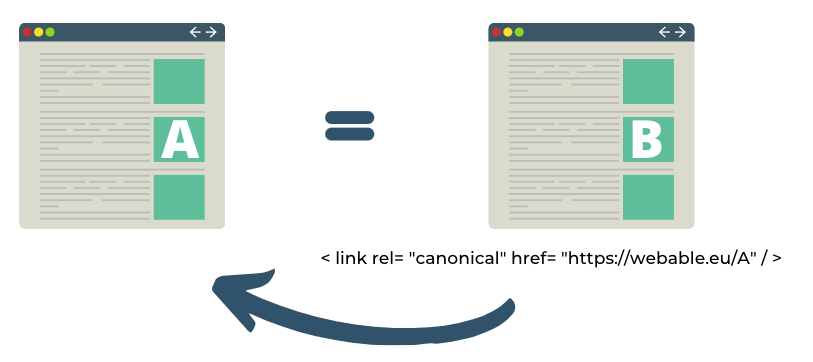

As we mentioned in the last article, it is important to avoid duplicate pages on your site. Each page should have a unique title tag and meta description. If you have to duplicate pages for site-specific reasons, you should at least indicate which page matters most to you by adding a canonical tag. This way, the search engines will prioritize it for ranking.

Having the same content in two different places on the site does not give you more chances of getting traffic. On the contrary, it hurts your SEO.

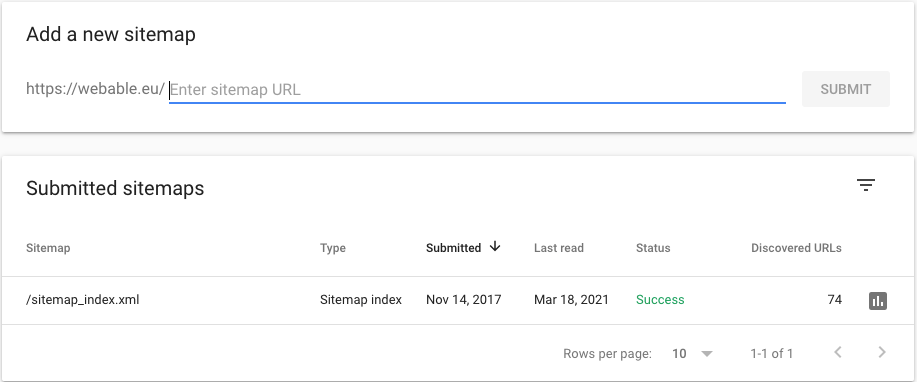

1.4 Generate the skeleton of the site with the sitemaps

The sitemap is the skeleton of your site: it lists the URLs you want to have indexed. In the case of a WordPress site, the sitemap is generated automatically by the Yoast extension (mentioned in the previous article, and a great help for everything SEO). When you use Google Search Console, you can submit the sitemap URL in the tool to indicate the important elements to index.
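As an illustration, the short Python sketch below lists the URLs declared in a sitemap. It assumes a standard XML sitemap using the sitemaps.org namespace; the sample content and the `example.com` URLs are made up, and in practice you would read the file produced by your own site.

```python
import xml.etree.ElementTree as ET

# Namespace used by standard XML sitemaps.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text):
    """Return every URL found in the <loc> elements of a sitemap."""
    root = ET.fromstring(xml_text)
    return [
        loc.text.strip()
        for loc in root.findall(".//sm:loc", SITEMAP_NS)
        if loc.text
    ]


# Hypothetical sitemap with two pages.
sample_sitemap = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/seo-audit/</loc></url>
</urlset>"""

urls_in_sitemap = sitemap_urls(sample_sitemap)
print(len(urls_in_sitemap), "URLs declared in the sitemap")
```

Comparing this list with the URLs found by a crawl is one way to check that the pages you care about are actually declared.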

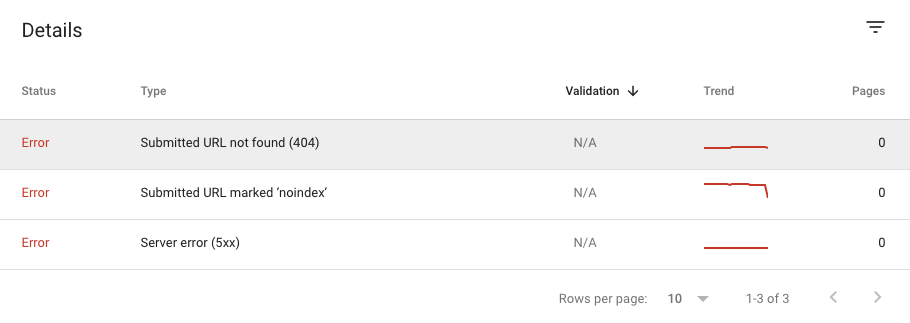

1.5 Find errors, redirects and broken links

The typical example of a broken link is one that leads to a 404 page. You may be wondering why finding these links is important. From a search engine perspective, 404 pages give the user a bad experience, because the person cannot find the information they are looking for and often leaves the site straight away. Having them on your site signals to the bots that the user experience may be affected, which can weigh on the SEO of your pages.

You can also do the same analysis using the Coverage report in Google Search Console.

So, to see whether there are errors on your site, you can use either the Screaming Frog application or the free Google Search Console tool (which has no limit on the number of URLs).
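Whichever tool you use, the logic is the same: each URL comes back with an HTTP status code, and the codes are sorted into groups. Here is a small Python sketch of that sorting step; the crawl rows are invented for the example and the group names are my own choice.

```python
def classify_status(status_code):
    """Map an HTTP status code to a coarse audit category."""
    if 200 <= status_code < 300:
        return "ok"
    if 300 <= status_code < 400:
        return "redirect"
    if 400 <= status_code < 500:
        return "broken"  # includes 404 pages
    if 500 <= status_code < 600:
        return "server error"
    return "other"


def group_by_status(crawl_rows):
    """Group URLs by category from (url, status_code) pairs."""
    groups = {}
    for url, status_code in crawl_rows:
        groups.setdefault(classify_status(status_code), []).append(url)
    return groups


# Hypothetical crawl results.
sample_crawl = [
    ("https://example.com/", 200),
    ("https://example.com/old-post/", 301),
    ("https://example.com/deleted-page/", 404),
]

status_groups = group_by_status(sample_crawl)
print(status_groups.get("broken", []))
```

The URLs in the "broken" group are the ones to fix first, either by restoring the page or by redirecting the link.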

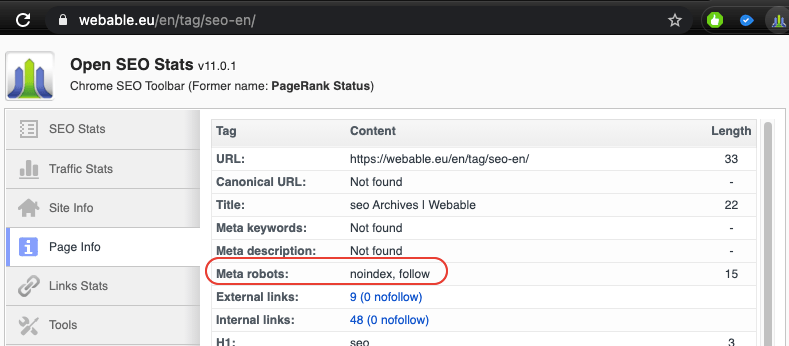

1.6 Analysis of the directives given to the robots

In the article on the search engine optimization of a page, we talked about the robots.txt file, which lists the parts of the site that we do not want bots to crawl. In addition to this file, there are also tags called “meta robots” that we can put directly on the page.

| Indexation-controlling parameter (most used) | What it does |
|---|---|
| Noindex | Tells a search engine not to index a page. |
| Index | Tells a search engine to index a page. Note that you don’t need to add this meta tag; it’s the default. |
| Follow | Even if the page isn’t indexed, the crawler should follow all the links on a page and pass equity to the linked pages. |
| Nofollow | Tells a crawler not to follow any links on a page or pass along any link equity. |
| Noimageindex | Tells a crawler not to index any images on a page. |
| None | Equivalent to using both the noindex and nofollow tags simultaneously. |
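To show how these directives combine, here is a small Python sketch that interprets the `content` value of a meta robots tag according to the table above. The function name and the returned keys are my own; it only covers the directives listed in the table.

```python
def parse_meta_robots(content):
    """Interpret a meta robots value such as "noindex, follow"."""
    directives = {
        token.strip().lower() for token in content.split(",") if token.strip()
    }
    # "none" is shorthand for "noindex, nofollow".
    blocks_everything = "none" in directives
    return {
        # Indexing and following are the defaults when nothing is specified.
        "index": not (blocks_everything or "noindex" in directives),
        "follow": not (blocks_everything or "nofollow" in directives),
        "index_images": "noimageindex" not in directives,
    }


print(parse_meta_robots("noindex, follow"))
print(parse_meta_robots("none"))
```

Running it on the values found during a crawl quickly shows which pages are excluded from the index, whether on purpose or by mistake.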

Case study: How to audit my site with Screaming Frog

In the case of my blog, after downloading Screaming Frog, I launched the application to see where my site could be optimized. As the application uses its own bot, it scans all the URLs, not only the pages and articles.

As my blog is built on WordPress, the spider (or bot) of the application also scanned all the tags, categories, etc. So we reach the 500 URLs of the free version very quickly.

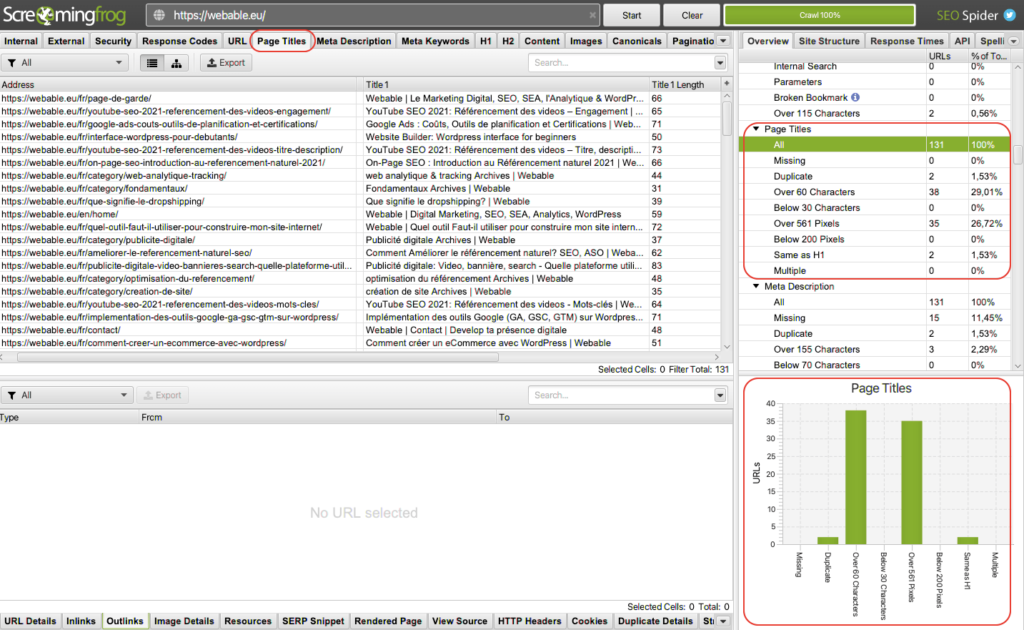

A/ Title tags Analysis

As soon as the application has finished running, you can select the “Page Titles” option in the upper bar to see all the pages of the site with their URL (“address”) and the corresponding title tag (“title 1”). The right sidebar of the application shows some data about the page titles.

In my case, the bot found 131 URLs; among these, 2 are flagged as duplicates and 38 exceed 60 characters. The first optimization for my site is therefore to rewrite the two duplicated entries so that each one becomes unique.

As for the number of characters, you may remember from my previous article that the best practice is not to exceed 60 characters.
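The two checks described here (duplicates and titles over 60 characters) are simple enough to sketch in a few lines of Python. This is only an illustration of the logic, not how Screaming Frog works internally, and the pages below are invented.

```python
from collections import Counter

MAX_TITLE_LENGTH = 60  # best practice mentioned in the article


def audit_titles(pages):
    """Flag URLs whose title is too long or shared with another URL.

    `pages` is a list of (url, title) pairs.
    """
    title_counts = Counter(title for _, title in pages)
    return {
        "too_long": [url for url, title in pages if len(title) > MAX_TITLE_LENGTH],
        "duplicates": [url for url, title in pages if title_counts[title] > 1],
    }


# Hypothetical pages.
sample_pages = [
    ("https://example.com/a/", "SEO Audit Checklist"),
    ("https://example.com/b/", "SEO Audit Checklist"),
    (
        "https://example.com/c/",
        "The Complete WordPress SEO Audit Checklist With Free Tools For Beginners",
    ),
]

title_report = audit_titles(sample_pages)
print(title_report)
```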

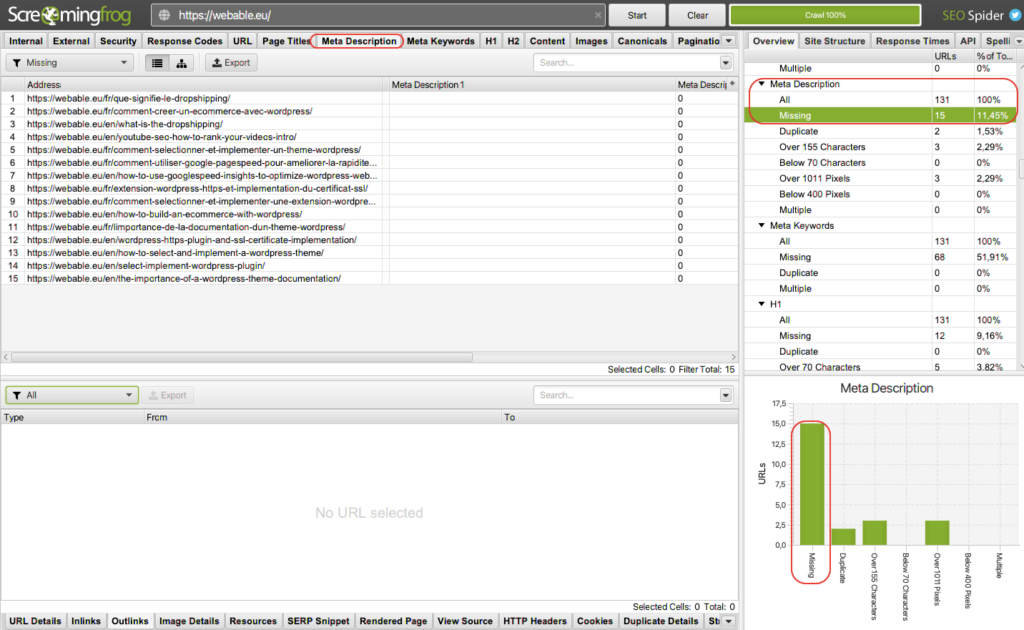

B/ Analysis of the meta description

To display the meta descriptions, I select “Meta Description” in the top bar. As with the title tags, the center of the screen then lists all the URLs with their corresponding meta description.

In the case of my blog, the data in the right sidebar showed that 15 pages had no meta description. Clicking on Meta Description > Missing acts as a filter: the central interface then shows only the URLs without a meta description, which lets me focus on the URLs to optimize.

You can audit your site in the same way for each element mentioned in the theoretical section.
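If you export the list to a CSV file, the same “missing” filter can be reproduced with a short Python sketch. The column names `Address` and `Meta Description 1` are an assumption on my part (modeled on the “address” and “title 1” columns mentioned above), so adjust them to match your own export.

```python
import csv
import io


def missing_meta_descriptions(
    csv_text, url_column="Address", description_column="Meta Description 1"
):
    """Return the URLs whose meta description cell is empty."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [
        row[url_column]
        for row in reader
        if not (row.get(description_column) or "").strip()
    ]


# Hypothetical export with one page lacking a description.
sample_export = (
    "Address,Meta Description 1\n"
    "https://example.com/,A blog about SEO for WordPress.\n"
    "https://example.com/tag/seo/,\n"
)

urls_to_fix = missing_meta_descriptions(sample_export)
print(urls_to_fix)
```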

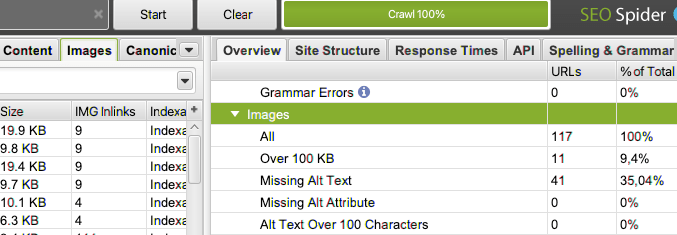

C/ Images Analysis

In an audit, we often forget to optimize images, even though their size can hurt the performance of the site. Using Screaming Frog, I noticed that 9% of my images needed to be optimized for size. In addition, 35% of my images did not have an alt attribute. Since images are indexed by the search engine and are therefore a source of traffic, I exported the images in question in order to optimize them. In WordPress, extensions like “Smush” also let you optimize images.
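For a single page, the share of images without alt text can be estimated with a few lines of Python. This is a rough sketch that treats a missing or empty `alt` attribute the same way; the HTML below is a made-up example.

```python
from html.parser import HTMLParser


class ImageAltParser(HTMLParser):
    """Counts <img> tags and records those with no usable alt text."""

    def __init__(self):
        super().__init__()
        self.total = 0
        self.missing_alt = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        self.total += 1
        # Missing and empty alt attributes are both reported.
        if not (attributes.get("alt") or "").strip():
            self.missing_alt.append(attributes.get("src"))


def alt_report(html):
    """Return (sources without alt text, percentage of images affected)."""
    parser = ImageAltParser()
    parser.feed(html)
    share = 100 * len(parser.missing_alt) / parser.total if parser.total else 0.0
    return parser.missing_alt, share


# Hypothetical page body with four images, one of them without alt text.
sample_body = """
<img src="/a.png" alt="Screaming Frog page titles tab">
<img src="/b.png">
<img src="/c.png" alt="Meta description filter">
<img src="/d.png" alt="Image report">
"""

images_without_alt, missing_share = alt_report(sample_body)
print(images_without_alt, missing_share)
```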

Conclusion for page status analysis with Screaming Frog

In summary, the Screaming Frog application is quite useful for sites with fewer than 500 URLs. For larger sites, you will have to upgrade to the paid version, which allows more in-depth analysis thanks to the integration with Google Search Console. Nevertheless, I would like to emphasize that the free version already lets you do a lot of analysis and identify quick optimizations for your site.

Step 2: Analyze the experience and speed of the site

In search engine optimization, Google penalizes slow sites because slowness hurts the user experience. Several tools are available to identify areas for optimization.

2.1 PageSpeed Insights: Analysis of the performance of my site

This tool gives a score between 0 and 100, with separate results for mobile and desktop, along with a list of all the elements to improve. For sites built on WordPress, I wrote an article that explains in depth the extensions you can use to increase the speed of your site.

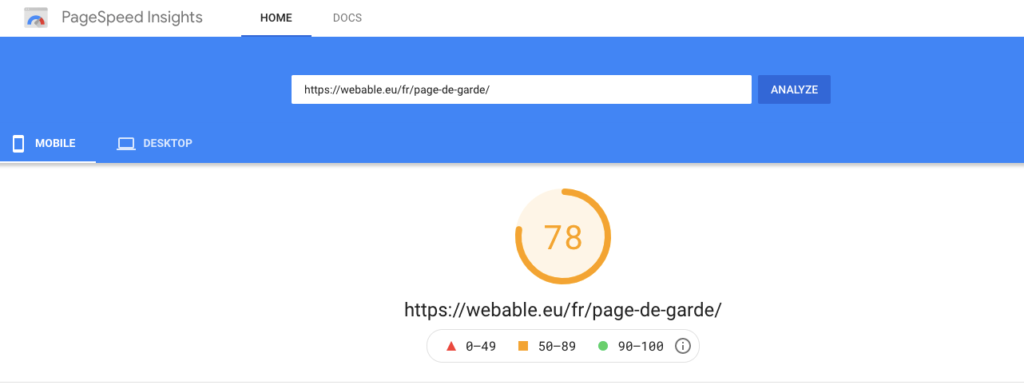

For example, in the image below, a page on my site scored 78 on mobile and 97 on desktop (March 2021), which is an excellent score. The goal with this tool is not to reach a score of 100, but rather to improve the speed and experience of your site as you optimize it. This will have an indirect impact on your site’s SEO.

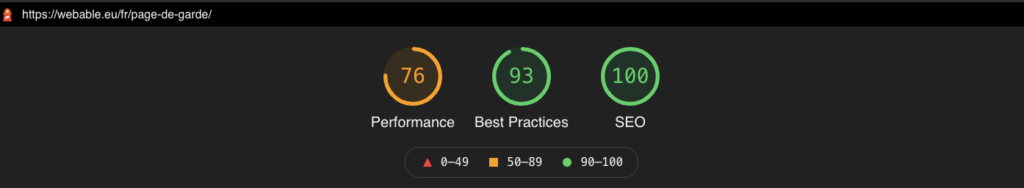
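If you want to track these scores over time rather than checking them by hand, the PageSpeed Insights API can be queried from a script. The Python sketch below only builds the request URL and reads the performance score from a response; it assumes the v5 endpoint and the `lighthouseResult.categories.performance.score` field (a value between 0 and 1), and the sample response is a trimmed, made-up stand-in rather than real API output.

```python
from urllib.parse import urlencode

# PageSpeed Insights API v5 endpoint (assumed here; check the official docs).
PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def build_psi_url(page_url, strategy="mobile"):
    """Build the request URL for one page and one strategy (mobile or desktop)."""
    return PSI_ENDPOINT + "?" + urlencode({"url": page_url, "strategy": strategy})


def performance_score(psi_response):
    """Convert the 0-1 performance score of a response into a 0-100 score."""
    score = psi_response["lighthouseResult"]["categories"]["performance"]["score"]
    return round(score * 100)


# Trimmed, hypothetical response containing only the field used above.
sample_response = {
    "lighthouseResult": {"categories": {"performance": {"score": 0.78}}}
}

request_url = build_psi_url("https://example.com/", strategy="mobile")
mobile_score = performance_score(sample_response)
print(request_url)
print(mobile_score)
```

Fetching `request_url` with any HTTP client and parsing the JSON body would give the real response to pass to `performance_score`.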

2.2 Lighthouse: Site performance analysis and SEO

There is also the Lighthouse extension, which lets you audit your site. Unlike PageSpeed Insights, it gives you two additional reports: “Best Practices” and “SEO”. The tool also gives a score between 0 and 100. The PageSpeed Insights results are themselves based on Lighthouse data; only the presentation differs. PageSpeed Insights distinguishes between mobile and desktop, while Lighthouse provides the additional reports. From my point of view, the two reports are complementary.

In the same category, I could also mention GTmetrix, which presents the Lighthouse data in a less technical way. I advise you to test all three tools and choose the one that suits you best.

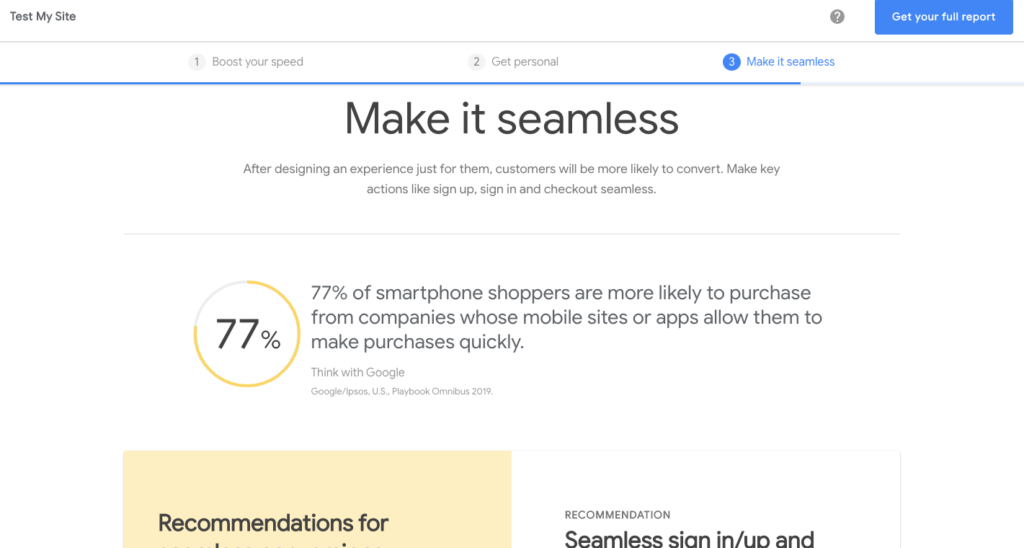

2.3 Test My Site: Analysis of a site based on the funnel

The last Google tool I would recommend is Test My Site, which focuses on mobile. The first two tools I mentioned were developed for a fairly technical audience. From my point of view, “Test My Site” is a simplified version of the first two, with more emphasis on mobile and on an e-commerce perspective. It is no coincidence that the tool lives on “Think with Google”, a blog that discusses digital topics from a high-level view. The tool consists of three parts: “boost your speed”, “Get personal” and “Make it seamless”. Each part is introduced by text that a non-technical person can understand.

In summary, there are three tools from Google that I would recommend to audit your site:

- PageSpeed Insights, to see the optimization opportunities on both mobile and desktop;

- The Lighthouse extension, to check whether best practices and SEO are implemented;

- “Test My Site”, if you have an e-commerce site.

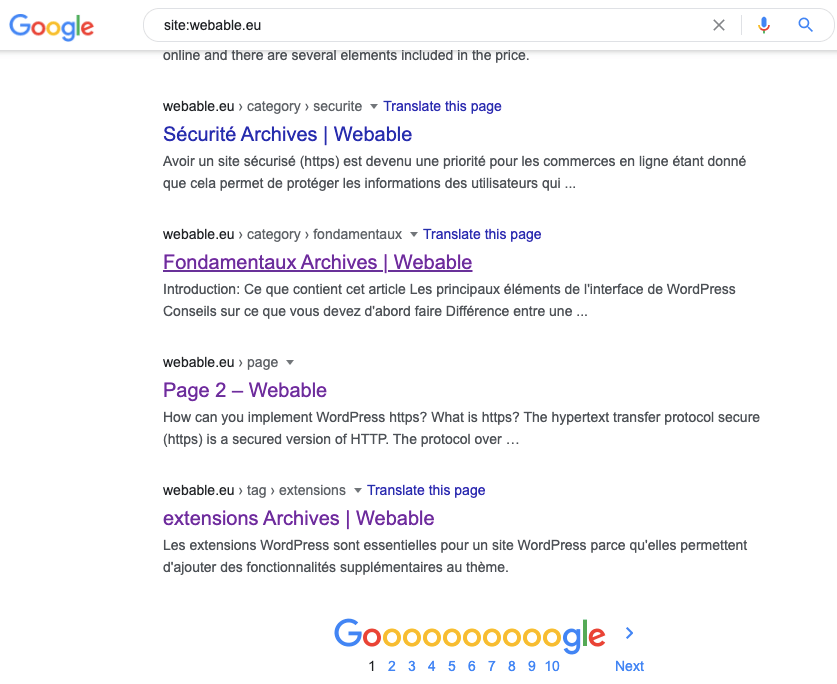

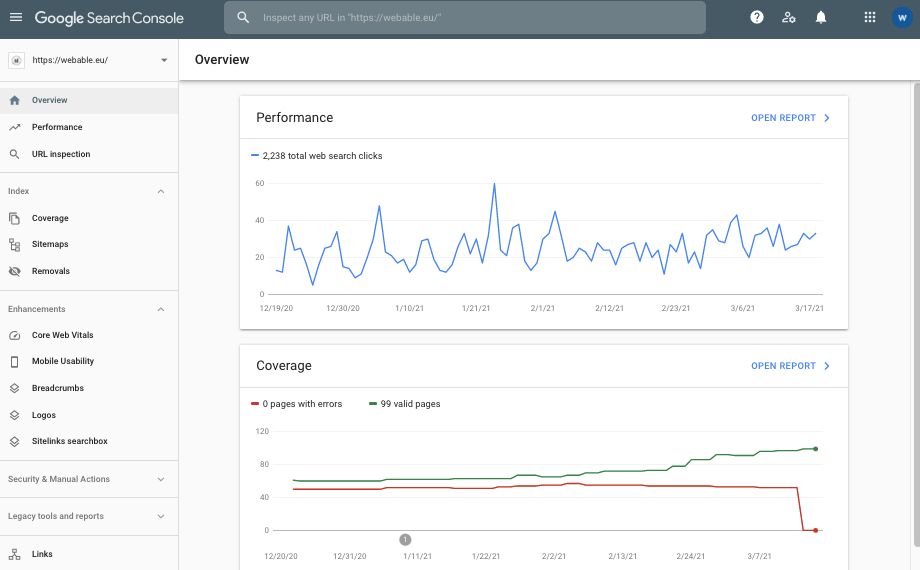

Step 3: Analyze the organic traffic: Google Search Console

Google’s free tool for analyzing organic traffic is quite handy for SEO. Once you have connected the tool to your site, you can check whether the vocabulary that users search with to reach your site corresponds to the positioning you want. For example, if I want to rank for queries about SEO and I see that the queries people actually use are about natural products, there is probably some optimization to do on the keywords I use, for example in my title and description tags. I also wrote an article explaining how to use Google Search Console (GSC) to analyze organic traffic and much more.
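One simple way to put a number on this check is to measure what share of your clicks comes from queries that contain the terms you are targeting. The Python sketch below does this on a list of (query, clicks) pairs; the rows and the target term are invented for the example, and in practice they would come from the queries report of your own Search Console property.

```python
def on_topic_click_share(rows, target_terms):
    """Return the fraction of clicks coming from queries that contain a target term.

    `rows` is a list of (query, clicks) pairs.
    """
    total_clicks = sum(clicks for _, clicks in rows)
    if total_clicks == 0:
        return 0.0
    on_topic_clicks = sum(
        clicks
        for query, clicks in rows
        if any(term.lower() in query.lower() for term in target_terms)
    )
    return on_topic_clicks / total_clicks


# Hypothetical query data.
sample_queries = [
    ("seo audit wordpress", 30),
    ("natural products shop", 50),
    ("wordpress seo checklist", 20),
]

share = on_topic_click_share(sample_queries, ["seo"])
print(f"{share:.0%} of clicks come from on-topic queries")
```

A low share suggests that the pages attract visitors for a topic other than the one you want to be known for.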

Conclusion

To conclude, I recommend auditing your site along three pillars to improve its SEO:

- Analyze the state of your site’s pages in order to find the quick and easy ways to optimize them.

- Analyze the performance and experience of your site, because they could penalize you in SEO.

- Analyze your organic traffic to check whether the vocabulary used by searchers is in line with your positioning.